Exclusive Feature on Building the Tech Stack for the Future of Work

Why Scaling AI in Operations Without a Control Plane is a Risk, Not a Strategy

Kumaran Shanmuhan, Chief Strategy Officer, Firstsource

Here is the uncomfortable truth about AI in enterprise operations: the technology isn't the bottleneck. Governance is.

LLM-powered copilots, chatbots, and autonomous agents have proliferated at remarkable speed. What hasn't kept pace is the infrastructure to govern them, which I call the “control plane.”

The control plane is a governance and execution layer that determines where decision authority lives, what evidence exists when something goes wrong, and how accountability is traced at the moment of execution rather than reconstructed afterward.
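To make the definition concrete, here is a minimal sketch of what such a layer might look like in code. It is an illustration under stated assumptions, not a reference design: the `ControlPlane` class, the `authority` map, and the example actions are all hypothetical names introduced here. The point it shows is that the authority check and the evidence record both happen at the moment of execution, before the action runs.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Dict, List, Optional, Set


@dataclass(frozen=True)
class DecisionRecord:
    """Evidence captured when a decision is attempted (hypothetical schema)."""
    action: str
    actor: str
    authorized: bool
    reason: str
    timestamp: str


@dataclass
class ControlPlane:
    # Assumption: decision authority is modeled as action -> actors allowed to execute it.
    authority: Dict[str, Set[str]]
    audit_log: List[DecisionRecord] = field(default_factory=list)

    def execute(self, actor: str, action: str, run: Callable[[], str]) -> Optional[str]:
        allowed = actor in self.authority.get(action, set())
        reason = "actor holds authority" if allowed else "no authority; escalate to a human"
        # The record is written before the action runs, so accountability
        # does not have to be reconstructed afterward.
        self.audit_log.append(
            DecisionRecord(action, actor, allowed, reason,
                           datetime.now(timezone.utc).isoformat())
        )
        return run() if allowed else None


# Usage: the AI agent may issue small refunds but may not waive fees.
plane = ControlPlane(authority={"refund_under_50": {"ai_agent"},
                                "waive_fee": {"supervisor"}})
result = plane.execute("ai_agent", "refund_under_50", lambda: "refund issued")
blocked = plane.execute("ai_agent", "waive_fee", lambda: "fee waived")
```

In this sketch the blocked request still leaves a record, which is the property that matters: the log answers who attempted what, and why it was or was not allowed, without anyone reconstructing it later.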

Without this layer, scaling AI doesn't accelerate operations. It scales risk.

This explains why a quieter sentiment keeps surfacing among operations leaders: "We like what we see in pilots. We're just not sure we trust it enough to scale."

The hesitation is rational. Deploying AI without a control plane isn't a strategy; it's a liability.

A Pilot That Worked—Until It Didn't

I saw this dynamic play out recently in a review of an AI pilot that everyone agreed had "worked." The metrics looked promising: handle time was down, and agents found the tool helpful. Leadership was discussing rollout.

Then someone asked: "What happens when it gives the wrong answer?"

The room went quiet. Not because the question was unexpected, but because the answer revealed how little had changed. The fallback was the same one operations teams have relied on for decades: coaching, retraining, and quality checks after the fact.

We were evaluating AI as a productivity tool when the real requirement was governance.

This pattern is endemic. MIT researchers found that most AI pilots—by some estimates, north of 90 percent—never translate into measurable business impact [1]. The technology works; the governance infrastructure to support it doesn't exist.

This realization is also changing what enterprises expect from their business process services partners: not just operational support, but the expertise to design and operate the governance layer that makes AI safe to scale.

Why Software Engineering Succeeded Where Operations Stalled

I’ve seen generative AI work exceptionally well in software engineering, well before copilots became a default talking point.

In one environment I was close to, teams used AI to accelerate iteration across the development lifecycle. Feature velocity increased, quality improved, and delivery timelines compressed. Not because standards were relaxed, but because feedback cycles became dramatically tighter.

That distinction matters. Software engineering didn’t succeed with AI because it adopted more intelligent models. It succeeded because it already had an operating system that governed execution.

When I look at operations, the contrast is stark. The work is no less complex, and the stakes are often higher, but the equivalent scaffolding rarely exists.

We ask AI to reason about policies and exceptions using documentation and training designed for humans, not systems—and then act surprised when trust becomes the limiting factor. This isn’t a failure of AI. It’s the absence of a control plane.

That absence explains why today’s most popular AI approaches, despite their strengths, stall when applied in the world of operations.

Why Today’s AI Approaches Share the Same Blind Spot

Enterprises typically adopt AI capabilities in sequence: first improving access, then grounding, then adding structure, and finally automating execution. Through that lens, the shared failure mode becomes obvious. None of these stages, on its own, determines what is authorized at the moment of execution.

Each delivers clear value, yet none is sufficient for safely scaling AI in operations.

The pattern becomes clearer when viewed side by side:

What unites them is a shared failure mode: each asks tools to perform the work of an operating system. Reliability doesn't emerge from intelligence alone. It emerges from the governance infrastructure that determines how intelligence gets used.

For most enterprises, the control plane won’t emerge from technology alone. It depends on operational judgment, exception-handling experience, and systems thinking that partners embedded in day-to-day workflows have cultivated over the years.

Where Governance Gaps Hurt Most

The governance gap carries its greatest consequences in trust-critical operations—work where being wrong is costly, heavily regulated, or difficult to reverse.

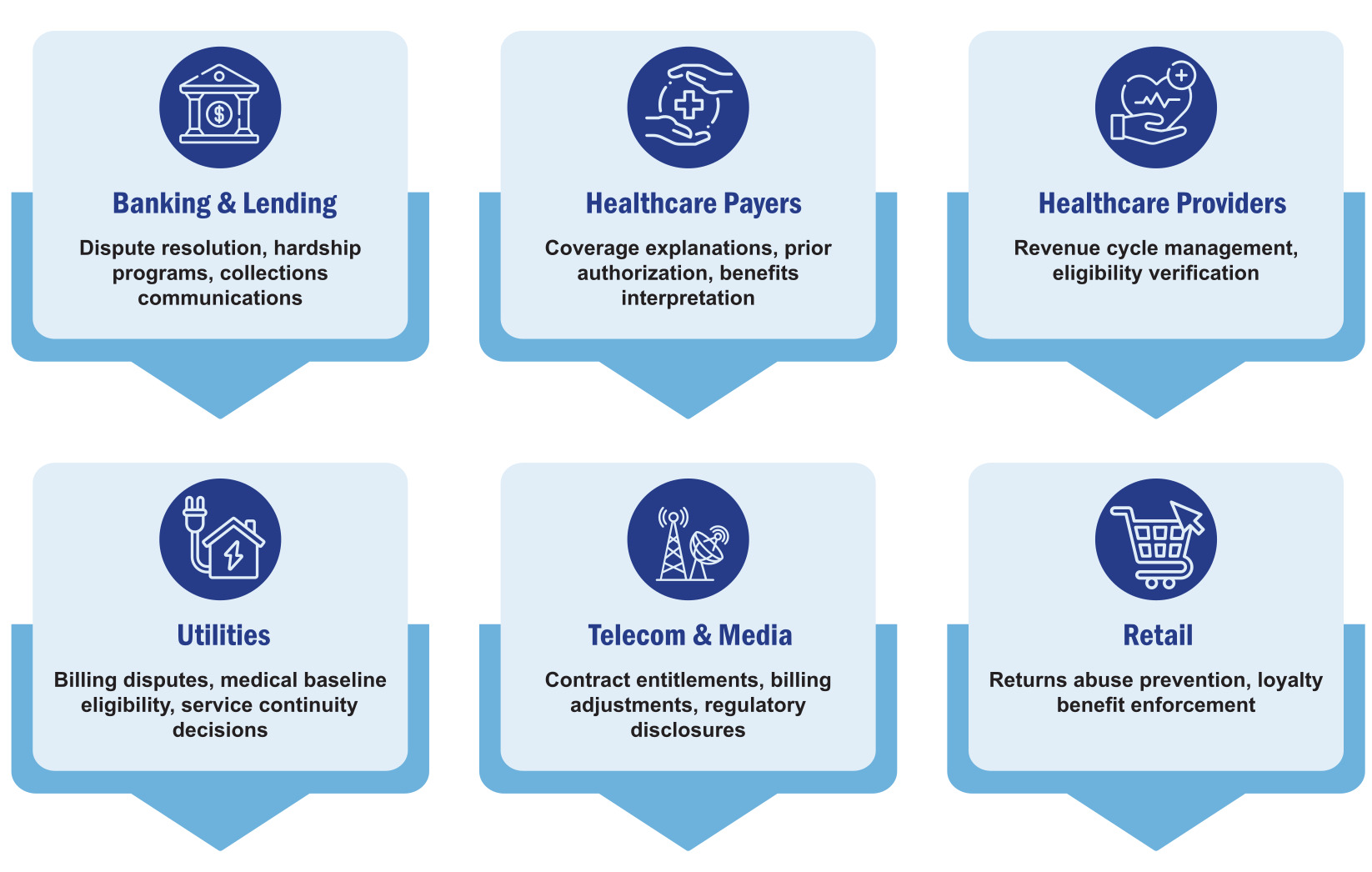

Consider just a few examples across industries:

- Banking & Lending: dispute resolution, hardship programs, collections communications

- Healthcare Payers: coverage explanations, prior authorization, benefits interpretation

- Healthcare Providers: revenue cycle management, eligibility verification

- Utilities: billing disputes, medical baseline eligibility, service continuity decisions

- Telecom & Media: contract entitlements, billing adjustments, regulatory disclosures

- Retail: returns abuse prevention, loyalty benefit enforcement

Across these workflows, how a decision is made and explained matters as much as the outcome itself. Errors rarely self-correct; they cascade—triggering downstream denials, appeals, complaints, and regulatory scrutiny.

Accountability must remain defensible long after execution, to customers, auditors, and regulators reconstructing what happened and why. This is why operations leaders are cautious. In trust-critical environments, AI cannot simply assist; it must be governed at the moment of execution.

If there is a single use case that exposes why the control plane matters, it is prior authorization in healthcare. The complexity isn’t a lack of documentation—coverage rules, clinical policies, and regulatory requirements are deeply codified. The challenge is applicability.

A determination must reconcile benefit coverage rules, medical necessity criteria, plan-specific variations, federal and state regulations, and exception pathways. The risk profile also differs by decision type: an incorrect approval creates financial and audit exposure, while an incorrect denial triggers appeals, parity law scrutiny, and regulatory action.

Providers face their own complexity: documentation burden, inconsistent requirements, and revenue cycle disruption. AI can accelerate retrieval, but without embedded governance to validate applicability, track decision rights, and manage exceptions, it simply accelerates the rate at which incorrect determinations are made.

In a trust-critical workflow like prior authorization, speed without governance amplifies risk rather than reducing it.
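The three governance checks named above (applicability, decision rights, exceptions) can be sketched as a simple routing gate. This is an illustrative sketch only. The `Policy` and `Request` fields, the `route` function, and in particular the rule that the AI may recommend approval but never issue a denial on its own are assumptions made for the example, not a description of any payer's actual process.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional, Tuple


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class Policy:
    policy_id: str
    plan: str
    state: str
    procedure: str


@dataclass(frozen=True)
class Request:
    plan: str
    state: str
    procedure: str
    ai_recommendation: str          # "approve" or "deny"
    has_exception_flag: bool = False


def find_applicable(policies: List[Policy], req: Request) -> Optional[Policy]:
    """Applicability: a policy counts only if plan, state, and procedure all match."""
    for p in policies:
        if (p.plan, p.state, p.procedure) == (req.plan, req.state, req.procedure):
            return p
    return None


def route(policies: List[Policy], req: Request) -> Tuple[Route, str]:
    policy = find_applicable(policies, req)
    if policy is None:
        return Route.HUMAN_REVIEW, "no applicable policy found"
    if req.has_exception_flag:
        # Exceptions are handled by people, not inferred by the model.
        return Route.HUMAN_REVIEW, "exception pathway requested"
    if req.ai_recommendation != "approve":
        # Assumed decision right: the AI has no authority to deny.
        return Route.HUMAN_REVIEW, "AI lacks authority to deny"
    return Route.AUTO_APPROVE, f"approved under {policy.policy_id}"
```

The design choice worth noticing is that every path returns a reason alongside the route. Retrieval speed is untouched, but nothing executes without a stated basis that a reviewer or auditor can read later.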

Teams doing this work every day know how much judgment and nuance these workflows demand. Any AI that participates here has to reflect that lived expertise, not replace it.

Boards recognize the exposure. Disclosure of material AI risk in S&P 500 filings has surged from roughly one in ten companies to nearly three in four over two years [2].

The question is no longer whether AI creates institutional exposure, but whether governance capabilities exist to manage it.

What COOs Are Really Reacting To

In conversations with COOs, the reaction to AI in operations is not resistance, but restraint.

There is broad recognition that today’s tools are powerful, and that early productivity gains are real. The concern is more fundamental: whether the organization can stand behind AI-enabled decisions once they move from assistance into execution.

Most COOs recognize that their operating model was designed for human judgment, not machine participation. Authority is distributed, exceptions are managed through experience, and accountability is reconstructed after the fact. That model has worked for decades, but it doesn’t scale cleanly when AI becomes part of the decision loop.

What’s emerging is a quiet consensus: until judgment, accountability, and escalation are designed into operations (not layered on afterward), AI will remain confined to the edges of the business. Not because leaders lack ambition, but because they understand the cost of getting it wrong.

Many teams tell me they’re excited about AI but cautious about crossing the threshold to execution. That hesitation is rational, and it’s why designing the control plane often becomes a shared effort between internal experts and partners who understand both the workflow realities and the AI.

Three Questions Every COO Should Answer Before Scaling AI

Before approving the next phase of AI rollout, every COO should be able to answer these three questions with confidence, not optimism:

If AI is becoming part of operational execution, governance is no longer a support function. It becomes a core operational capability, as essential to sustainable performance as the technology itself.

The emerging differentiator is not who has the most advanced models, but who can design and operate the control plane that governs them. That’s where partners who’ve lived these workflows can help translate what teams already know into something machines can govern.

The Divide That’s Already Forming

AI will not replace operations. It will expose how well operations are governed. This shift marks the most significant operating model redesign since workflow digitization.

The competitive divide ahead isn't between organizations that adopt AI and those that don't. It's between those that can trust AI decisions at the moment of execution and those that cannot.

Without a control plane: pilots multiply, production stalls, exceptions are managed informally, accountability is reconstructed only after failures surface.

With one: knowledge becomes authoritative, judgment is explicit, decisions are traceable by design. This discipline compounds into institutional resilience—not just efficiency.

Remember that silent room where no one could answer what happens when AI fails? The organizations that lead in the next decade will be the ones that treat the control plane as a first-class operational capability, often co-designed and operated with partners who understand how to translate rules, policies, and accountability into machine-safe execution.

And if you’re navigating these questions now, I’d welcome a conversation—let’s build the control plane your operations deserve.

References:

[1] Challapally, A., Pease, C., Raskar, R., & Chari, P. (2025, July). State of AI in business 2025 (v0.1) [Preliminary findings from AI implementation research from Project NANDA]. MLQ.ai. https://mlq.ai/media/quarterly_decks/v0.1_State_of_AI_in_Business_2025_Report.pdf

[2] Johnston, A. (2025, October 27). Generative AI shows rapid growth but yields mixed results. S&P Global Market Intelligence. https://www.spglobal.com/market-intelligence/en/news-insights/research/2025/10/generative-ai-shows-rapid-growth-but-yields-mixed-results
